
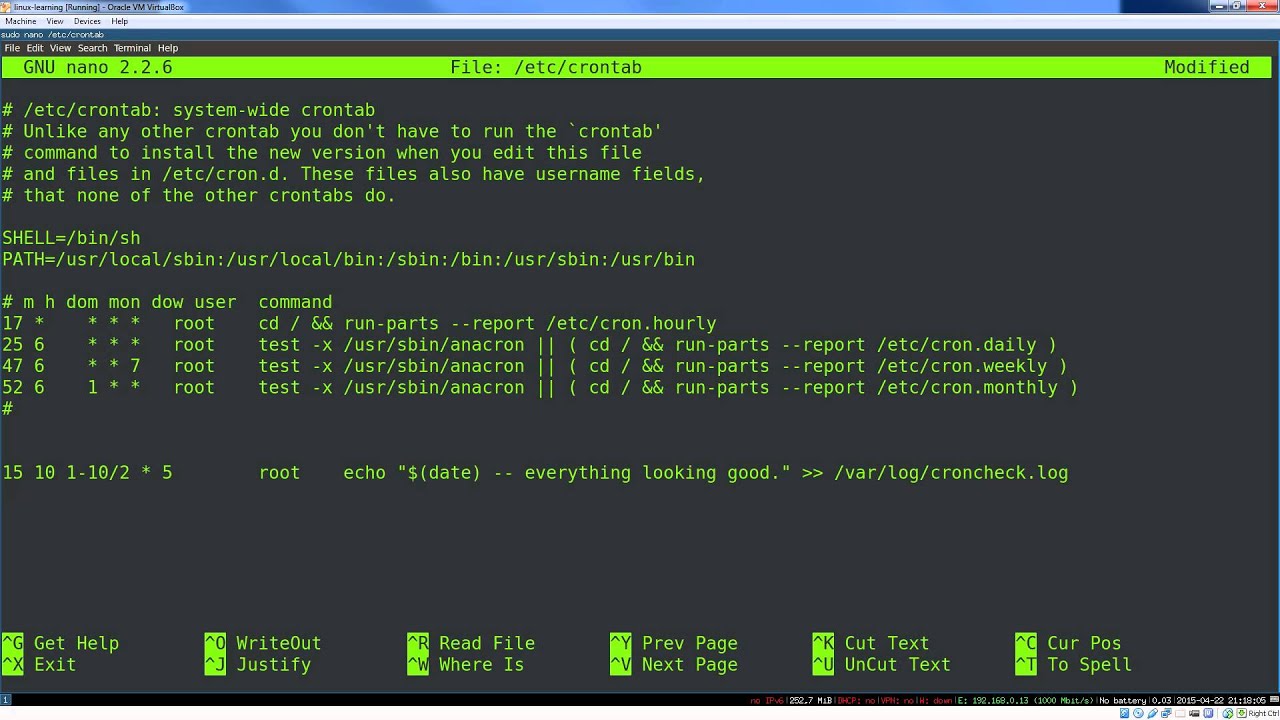

How to easily log all output from any executable

Put this magic line at the top of any script, including your scripts or wrapper scripts which get called as cron jobs, to have all stdout and stderr output thereafter logged automatically:

    exec > >(tee -a "$HOME/cronjob_logs/my_log.log") 2>&1

Cron jobs are just scripts that get called at a fixed time or interval by a scheduler. They log only what their executables tell them to log.

But what you can do is edit the call command listed in your `crontab -e` file so that all output gets logged whenever it is called. There are a variety of approaches, such as redirecting output to a file at call time, like this:

    # `crontab -e` entry which gets called every day at 2am
    0 2 * * * some_cmd > $HOME/cronjob_logs/some_cmd.log 2>&1

But what if I want it to log automatically when I call it normally to test it, too? I'd like it to log every time I call some_cmd, and I'd still like it to show all output on the screen, so that when I call the script manually to test it I still see all output! The best way I have come up with is this. It looks really weird, but it's very powerful! Simply add these lines to the top of any script and all stdout or stderr output thereafter will get automatically logged to the specified file, named after the script you are running. So, if your script is called some_cmd, then all output will get logged to $HOME/cronjob_logs/some_cmd.log:

    # See my ans: ...
    FULL_PATH_TO_SCRIPT="$(realpath "$...
    ....log") 2>&1
    echo "="
    echo "Running cronjob \"$FULL_PATH_TO_SCRIPT\""
    echo "Cmd: $0 ..."
    echo "="

    # THE REST OF THE SCRIPT GOES BELOW THIS POINT.

If you use bash and want bash to run a startup script, you have to define an environment variable, "BASH_ENV=/path/to/my/startup/script", in your crontab before the line where you define the job.
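The self-logging technique described above can be tried out safely with a small demonstration. This is only a sketch under stated assumptions: `DEMO_DIR`, `demo_job`, and the overridable `LOG_DIR` variable are hypothetical names introduced here so the demo can run in a scratch directory instead of `$HOME/cronjob_logs`. The demo writes a tiny job script that redirects its own output through `tee -a`, runs it, and compares what reached the screen with what reached the log file.

```shell
#!/usr/bin/env bash
# Sketch only: DEMO_DIR, demo_job, and LOG_DIR are hypothetical names.
DEMO_DIR="$(mktemp -d)"

# Write a tiny job script that logs its own output.
cat > "$DEMO_DIR/demo_job" <<'EOF'
#!/usr/bin/env bash
# LOG_DIR is overridable (an assumption made for this demo); it falls back
# to the $HOME/cronjob_logs directory used in the text.
LOG_DIR="${LOG_DIR:-$HOME/cronjob_logs}"
mkdir -p "$LOG_DIR"
SCRIPT_NAME="$(basename "$0")"

# From this point on, stdout and stderr both go through `tee -a`, which
# appends to the log file and passes everything on to the original stdout.
exec > >(tee -a "$LOG_DIR/$SCRIPT_NAME.log") 2>&1

echo "normal output"
echo "error output" >&2
EOF

# Capturing the output makes this shell wait until `tee` has finished writing.
screen_output="$(LOG_DIR="$DEMO_DIR/logs" bash "$DEMO_DIR/demo_job")"
log_output="$(cat "$DEMO_DIR/logs/demo_job.log")"

printf 'screen:\n%s\n' "$screen_output"
printf 'log file:\n%s\n' "$log_output"
```

Because `tee` is given `-a`, repeated runs append to the same log file rather than overwriting it, which is usually what you want for a job that runs on a schedule.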
Note that shell scripts started from cron are considered non-login, non-interactive shells, so your standard shell startup scripts are not run.

Most of my cron jobs invoke bash scripts I wrote specifically to be started by cron for a particular reason. In those, especially when I'm initially writing and debugging them, I like to use bash's "set -vx" so that the unexpanded and expanded form of each line of the shell script gets written out before it is executed. Bash writes this trace to stderr, which cron captures along with stdout.

If you want your jobs to write to specific log files, you can use standard output redirection as suggested, or, supposing your cron job is a shell script, you could have your script call logger(1) or syslog(1) or whatever other command-line tool your OS provides for sending arbitrary messages to syslog. Then you could use your OS's built-in methods for configuring which kinds of messages get logged where, perhaps by editing /etc/nf.

If you would rather rely on the output that cron emails, one answer might just be to fire up your mailer daemon, and maybe make sure you have a ~/.forward file to forward your local mail along to your "real" email account.
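To make the "set -vx" debugging habit mentioned above concrete, here is a minimal sketch. The names `DEMO_DIR` and `traced_job` are hypothetical and exist only for this demonstration. It runs a two-line script with tracing enabled and captures only the trace, which bash writes to stderr.

```shell
#!/usr/bin/env bash
# Sketch only: DEMO_DIR and traced_job are hypothetical names.
DEMO_DIR="$(mktemp -d)"

# A tiny job with tracing enabled:
#   -v prints each line as the shell reads it (unexpanded form)
#   -x prints each command just before it runs (expanded form)
cat > "$DEMO_DIR/traced_job" <<'EOF'
set -vx
name="world"
echo "hello $name"
EOF

# Keep only the trace: stderr goes into the capture, stdout is discarded.
trace="$(bash "$DEMO_DIR/traced_job" 2>&1 >/dev/null)"
printf '%s\n' "$trace"
```

In a real cron job you would normally not discard stdout; redirecting both streams to one log file (or letting cron collect them) keeps the trace lines interleaved with the job's regular output.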

Most cron daemons on platforms I've worked with automatically email the stdout/stderr of user cron jobs to the user whose crontab the job came from. I forget what happens with system-wide (non-user-specific) cron jobs from /etc/crontab.

The thing is, people don't always set up a mailer daemon (that is, a Mail Transfer Agent (MTA) like sendmail, qmail, or postfix) on most Unix-like OSes anymore. So the cron job output emails just die in a local mail spool folder somewhere, if they even get that far.
